What is network stack as a service?

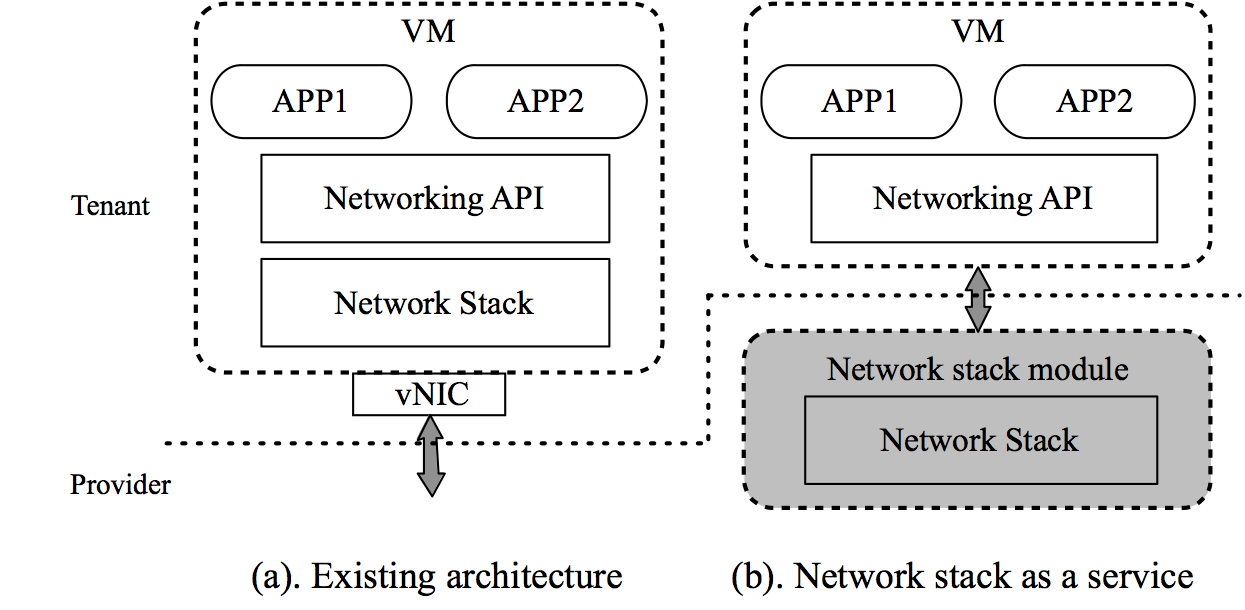

In today’s public clouds, the tenant network stack is implemented inside the virtual machines (VMs). This legacy architecture has two fundamental challenges. First, network stack deployment is unnecessarily difficult, and innovation is stagnant as a result. Even though many new protocols and stacks (e.g. DCTCP, F-Stack) have been deployed in private settings, to our knowledge none of them has seen wide adoption in public clouds. Second, networking is provided inefficiently. The provider cannot efficiently utilize and manage its physical resources for networking. Providing performance guarantees remains extremely challenging, and network management is troublesome.

We address these challenges by advocating network stack as a service as a new paradigm to provision networking. We propose to use existing network APIs such as BSD sockets, instead of the vNIC, as the abstraction boundary between application and infrastructure. This new separation of concerns allows us to decouple the VM network stack from the guest OS and have the provider offer it as an independent service. Packets are handled only outside the tenant VM, in a network stack module (NSM) supplied by the provider, whose design and implementation are transparent to tenants. Each VM has its own NSM whose resources are dedicated to providing networking services.

Network stack as a service brings a number of benefits that are missing in today’s architecture. Deployment becomes easier and more flexible: tenants can now deploy a stack without much effort. Further, as long as the applications use the socket API, they can use any stack independent of the guest kernel or the stack’s API, since all these complexities are encapsulated in the NSM. Efficiency can also be greatly improved: by gaining control over the network stack, the provider can now offer meaningful SLAs and potentially charge tenants for them. It can also fully optimize the resource usage for networking, for example by multiplexing different VMs onto the same NSM to serve their traffic.

See a [dialogue between Luigi and Jennifer] published at ACM HotNets 2017 about the motivation and use cases of our idea.

NetKernel

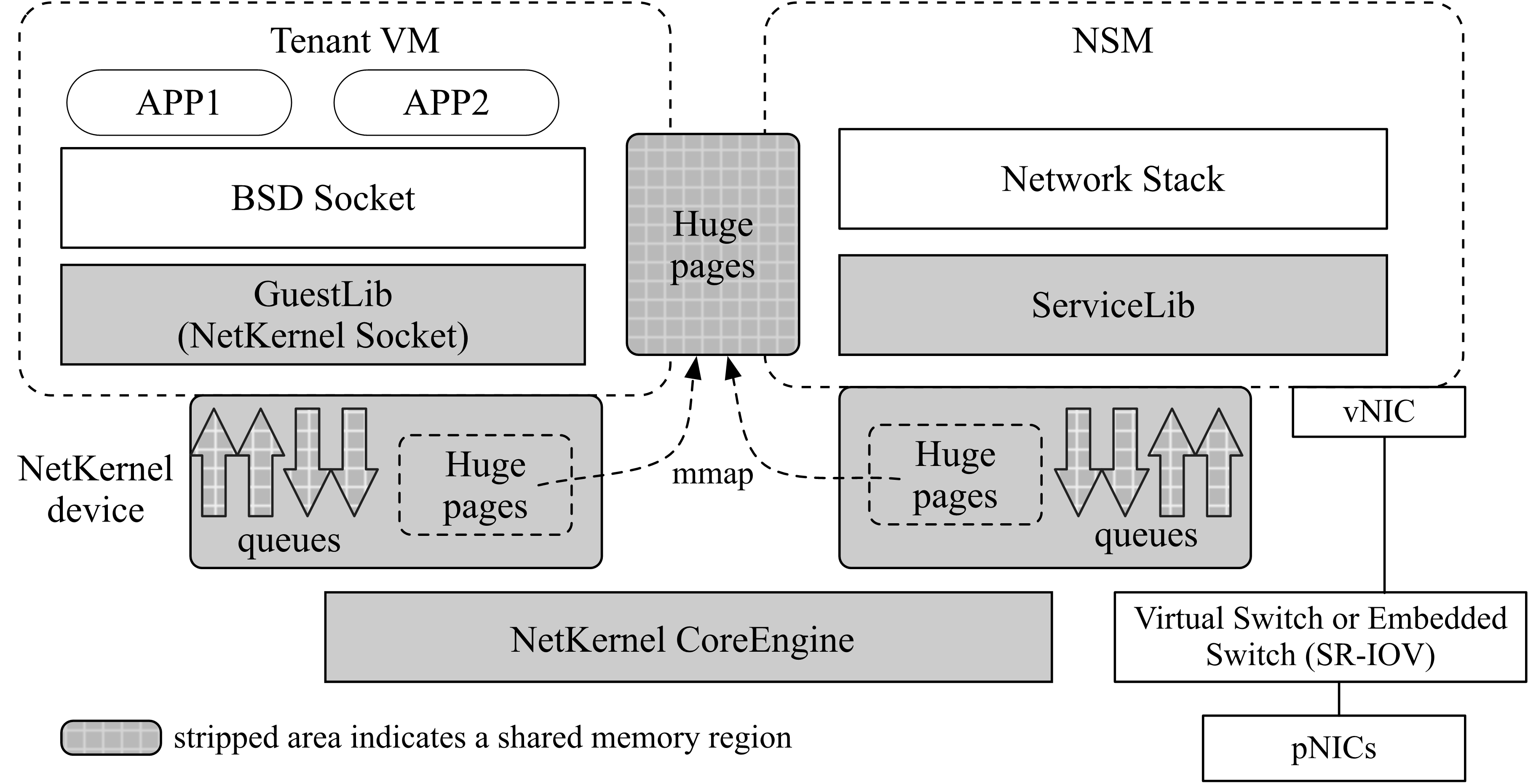

NetKernel separates the network stack from the guest without radical change to the tenant VM, so that it can be readily deployed. As depicted in the figure above, the BSD socket APIs are transparently redirected to a complete NetKernel socket implementation in GuestLib, which resides in the guest kernel.

The GuestLib can be readily deployed as a kernel patch and is the only change we make to the tenant VM. Network stacks are implemented by the provider on the same host as Network Stack Modules (NSMs), which are individual VMs. Inside the NSM, a ServiceLib interfaces with the network stack. The NSM connects to the overlay switch, be it a virtual switch or a hardware one, and then the pNICs. Thus our design also supports SR-IOV.

All socket operations and their results are translated into NetKernel Queue Elements (NQEs) by GuestLib and ServiceLib. NQEs serve as the intermediate representation of semantics between the tenant VM and the network stack in the NSM. To facilitate NQE transmission, GuestLib and ServiceLib each have a NetKernel device (NK device), consisting of one or more sets of lockless queues. Each queue set has a send queue and a receive queue for operations with data transfer, and a job queue and a completion queue for control operations without data transfer. Each NK device connects to a software switch called CoreEngine, which runs on the hypervisor and performs the actual communication between GuestLib and ServiceLib (CoreEngine can also be offloaded to hardware, e.g., FPGA, ASIC or SoC). A unique set of hugepages is shared by each VM-NSM pair for application data exchange. An NK device also maintains a hugepage region that is memory mapped to the corresponding application hugepages.
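To make the data path above more concrete, here is a minimal Python sketch of one queue set per NK device, a CoreEngine that moves NQEs between a VM and its NSM, and a shared buffer standing in for the hugepages. It is an illustration under stated assumptions, not NetKernel's actual implementation: the NQE fields, the function names (`guest_connect`, `guest_send`, `service_poll`), and the choice of which queue carries which direction are all hypothetical, and plain deques replace the lockless queues.

```python
from collections import deque
from dataclasses import dataclass
from enum import Enum, auto


class Op(Enum):
    CONNECT = auto()   # control operation, carries no payload
    SEND = auto()      # data operation, payload lives in the shared hugepages


@dataclass
class NQE:
    """Hypothetical NQE layout; the real fields are not given in the text."""
    op: Op
    sock_id: int
    offset: int = 0    # payload position inside the shared hugepage region
    length: int = 0
    result: int = 0


class NKDevice:
    """One queue set; deques stand in for the lockless queues."""
    def __init__(self):
        self.send = deque()
        self.receive = deque()
        self.job = deque()
        self.completion = deque()


class CoreEngine:
    """Moves NQEs between the NK devices of one VM-NSM pair."""
    def __init__(self, guest, service):
        self.guest, self.service = guest, service

    def run_once(self):
        # Assumed directions: requests flow guest -> NSM on job/send,
        # results and received data flow NSM -> guest on completion/receive.
        for src, dst in ((self.guest.job, self.service.job),
                         (self.guest.send, self.service.send),
                         (self.service.completion, self.guest.completion),
                         (self.service.receive, self.guest.receive)):
            while src:
                dst.append(src.popleft())


def guest_connect(dev, sock_id):
    """GuestLib side: turn a connect() call into a control NQE."""
    dev.job.append(NQE(Op.CONNECT, sock_id))


def guest_send(dev, hugepages, sock_id, payload):
    """GuestLib side: copy the payload to hugepages, then enqueue a data NQE."""
    hugepages[0:len(payload)] = payload
    dev.send.append(NQE(Op.SEND, sock_id, offset=0, length=len(payload)))
    return len(payload)


def service_poll(dev, hugepages, wire):
    """ServiceLib side: hand NQEs to the stack (here, a list named wire)."""
    while dev.job:
        nqe = dev.job.popleft()
        nqe.result = 0  # pretend the stack's connect() succeeded
        dev.completion.append(nqe)
    while dev.send:
        nqe = dev.send.popleft()
        wire.append(bytes(hugepages[nqe.offset:nqe.offset + nqe.length]))


hugepages = bytearray(4096)            # stands in for the shared hugepages
guest, service = NKDevice(), NKDevice()
engine = CoreEngine(guest, service)
wire = []                              # stands in for the NSM's network stack

guest_connect(guest, sock_id=1)
guest_send(guest, hugepages, sock_id=1, payload=b"hello")
engine.run_once()                      # requests reach the NSM
service_poll(service, hugepages, wire)
engine.run_once()                      # the completion returns to the guest
```

Note that only the small NQE travels through the queues; the payload itself stays in the shared buffer and is referenced by offset and length, which is the role the hugepages play in the description above.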

Publications

NetKernel: Making Network Stack Part of the Virtualized Infrastructure [Paper] [Talk]

Zhixiong Niu, Hong Xu, Peng Cheng, Qiang Su, Yongqiang Xiong, Tao Wang, Dongsu Han, Keith Winstein

USENIX ATC 2020.

Network Stack as a Service in the Cloud [Paper] [Talk]

Zhixiong Niu, Hong Xu, Dongsu Han, Peng Cheng, Yongqiang Xiong, Guo Chen, Keith Winstein

ACM HotNets 2017.

There is an OvS Orbit interview Henry did with Ben Pfaff about NetKernel, which can be found here.

Artifact

Contact

Contact Henry, Zhixiong or Qiang with any questions or suggestions.